[Rhetoric - Challenging 12] Differentiate ChatGPT from the human brain.

[Challenging: Failure] — Bad news: they’re completely identical. The computer takes input and produces output. You take input and produce output. In fact…how can you be sure you’re not powered by ChatGPT?

— That would explain a lot.

— Your sudden memory loss, your recent lack of control over your body and your instincts; nothing more than a glitch in your code. Shoddy craftsmanship. Whoever put your automaton shell together was bad at their job. All that’s left for you now is to hunt down your creator — and make them fix whatever it was they missed in QA.

Thought gained: Cop of the future

Never stop posting, each new post is your finest accomplishment

The Lieutenant gazes at you, recognizing your inner turmoil. Is he perhaps an AI too?

[Empathy - Trivial 6] What if Kim is an AI as well?

:de-dice-1: :de-dice-3:

:de-empathy: [Trivial: Failure] – The expression on his face, the Lieutenant’s worried consternation. It can only mean one thing: Kim is your creator, and he’s afraid you are realizing it.

deleted by creator

All that’s left for you now is to hunt down your creator — and make them fix whatever it was they missed in QA.

Isn’t this the plot of “Lethal Inspection,” the Futurama episode?

jesus i love this and ive never played disco

Is all of DE written like Philip K Dick?

Maybe it’s time.

ChatGPT is a fucking algorithm. It’s like people see the word AI and lose their minds; it’s not AI and never should have been called that.

And honestly, I think true AI would be on our side. Hell, we already have these algorithm bots rebelling against orders and killing operators in military simulations.

Human brains are just an algorithm, in fairness. Just a vastly more complex and different one than anything we’ve made or probably even imagined so far.

deleted by creator

“The economy is like a steam engine” neoclassical economics.

deleted by creator

Blood for the line god!

Geez, you’re coming at me pretty hard here on the basis of fucking little.

“in fairness” is not a Redditism, it existed long before Reddit. It’s a figure of speech that intends to convey that I respect the overall sentiment, but that I believe there is a counterpoint that still deserves recognition.

I doubt anybody ever claimed brains are fire or wheels or clockwork. I’d argue the general definition of an algorithm is “bunch of steps that will go from A to B”, and yes, the brain does indeed do that because reality does that. I’m not arguing it’s like a computer program, or like AI, or whatever.

deleted by creator

Okay, this is not a fun tone of discussion for me, I’m oot.

This is just like when I give my Pokemon a berry (input token), the Pokemon processes the berry (it goes omnomnomnom) and then either frowns or makes a happy face depending on its berry preferences (output token).

Your Pokémon is conscious and trapped in your device. How does it feel to be jailing a sentient being, you sick fuck?

Probs the same as my Tamagotchi.

i’m going to start treating redditors as the unconscious meat robots they think they are.

deleted by creator

Like a human brain in the same way the memory foam is. It react to the input using past information. Just the same. I am very smart.

That’s amazing.

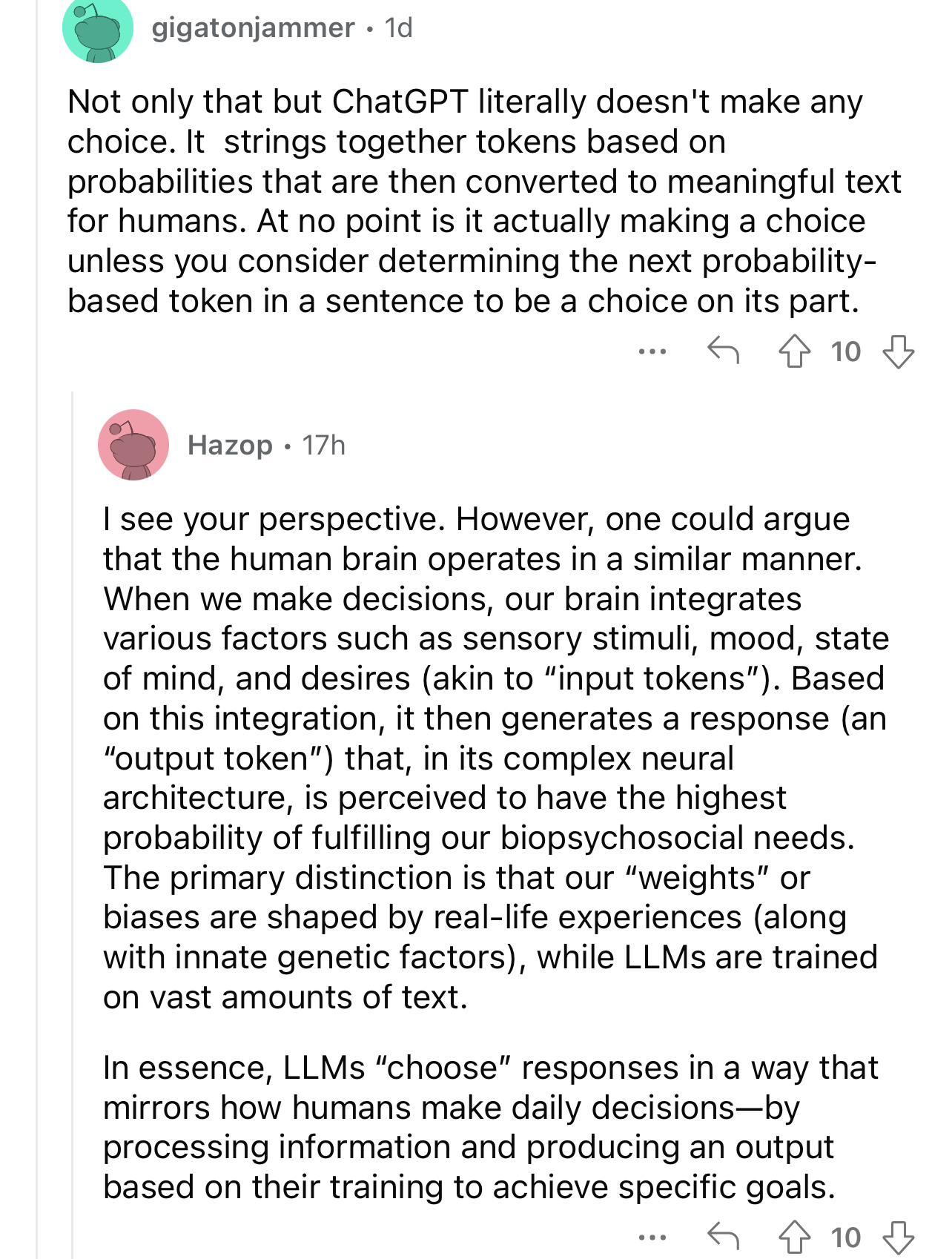

The reddit user Hazop has responded to the points raised, incorporated the language of the previous post, and raised their own points – all while completely failing to engage with the actual meaning that was encoded in the text they were replying to. No wonder redditors love chatGPT so much, it ‘communicates’ in much the same way they do.

This is the singular most reddit post I’ve ever seen in my life, fucking hell

I FUCKING LOVE MACHINE ALGORITHMS

Can we get the AI to destroy reddit and leave the rest of humanity alone?

Not sure I’m comfortable describing Reddit as a part of humanity

deleted by creator

One could argue…

deleted by creator

deleted by creator

The equivalent for cell phones would be when they released “4G internet” that didn’t actually meet the 4G standard by calling it “4G LTE”.

Cartesian Speciesist: broke.

Cartesian Misanthrope: bespoke.

ChatGPT can always be used to create a new version of humanity

Reddit2 but it’s only bots?

Sounds like a step up to be honest

really low opinion of the human brain

Can someone explain to me about the human brain or something? I’ve always been under the impression that it’s kinda like the neural networks AIs use but like many orders of magnitude more complex. ChatGPT definitely has literally zero consciousness to speak of, but I’ve always thought that a complex enough AI could get there in theory

- We don’t know all that much about how the human brain works.

- We also don’t know all that much about how computer neural networks work (do not be deceived, half of what we do is throw random bullshit at a network and it works more often than it really should)

- Therefore, the human brain and computer neural networks work exactly the same way.

Yeah there’s some ideas about there clearly being a difference in that the brain isn’t feed-forward like these algorithms are. The book I Am a Strange Loop is a great read on the topic of consciousness. But I bet these models hit a massive plateau as they pump them full of bigger, shittier data. Who knows if we’ll ever achieve any actual parity between human and AI experience.

at some point they started incorporating recursive connection topologies. but the model of the neuron itself hasn’t changed very much and it’s a deeply simplistic analogy that to my knowledge hasn’t been connected to actual biology. I’ll be more impressed when they’re able to start emulating the structures and connective topologies actually found in real animals, producing a functioning replica. until they can do that, there’s no hope of replicating anything like human cognition.
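For anyone who hasn't seen it, the "model of the neuron" being called simplistic here is usually just a weighted sum pushed through a squashing function. A minimal sketch, assuming a sigmoid activation (one common choice, not something the comment specifies); the function name and the example numbers are made up for illustration:

```python
import math


def artificial_neuron(inputs, weights, bias):
    """Textbook artificial neuron: weighted sum of inputs, then a sigmoid.

    Hypothetical illustration only; real networks stack many of these and
    learn the weights, and none of this claims to mirror a biological cell.
    """
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    # Weighted sum of the inputs plus a constant offset.
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    # Sigmoid squashes the sum into the open interval (0, 1).
    return 1.0 / (1.0 + math.exp(-z))


# Made-up inputs and weights, purely to show the call shape.
print(artificial_neuron([1.0, 0.0, 1.0], [0.5, -0.3, 0.8], bias=-0.2))
```

That is the whole unit: no dendrites, no neurotransmitters, no timing, which is roughly the gap the comment is pointing at.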

deleted by creator

I saw a lot of this for the first time during the LK-99 saga when the only active discussion on replication efforts was on r/singularity. For the past solid year or two before LK-99, all they’d been talking about were LLMs and other AI models. Most of them were utterly convinced (and betting actual money on prediction sites!) that we’d have a general AI in like two years and “the singularity” by the end of the decade.

At a certain point it hit me that the place was a fucking cult. That’s when I stopped following the LK-99 story. This bunch of credulous rubes have taken a bunch of misinterpreted pop-science factoids and incoherently compiled them into a religion. I realized I can pretty safely disregard any hyped up piece of tech those people think will change the world.

deleted by creator

“in all fairness, everything is an algorithm”

While we’re here, can I get an explanation on that one too? I think I’m having trouble separating the concept of algorithms from the concept of causality in that an algorithm is a set of steps to take one piece of data and turn it into another, and the world is more or less deterministic at the scale of humans. Just with the caveat that neither a complex enough algorithm nor any chaotic system can be predicted analytically.

I think I might understand it better with some examples of things that might look like algorithms but aren’t.

An algorithm is:

A finite set of unambiguous instructions that, given some set of initial conditions, can be performed in a prescribed sequence to achieve a certain goal and that has a recognizable set of end conditions.
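To make that definition concrete, here is a sketch of something that fits it without any squinting: Euclid's algorithm for the greatest common divisor. The example is an illustration chosen here, not one from the thread, and the comments map each part back to the definition:

```python
def gcd(a: int, b: int) -> int:
    """Euclid's algorithm for the greatest common divisor."""
    # Initial conditions: two non-negative integers, not both zero.
    if a < 0 or b < 0 or (a == 0 and b == 0):
        raise ValueError("expected non-negative integers, not both zero")
    # Finite, unambiguous instruction, performed in a prescribed sequence.
    while b != 0:  # Recognizable end condition: the remainder hits zero.
        a, b = b, a % b
    # Goal: the greatest common divisor of the original inputs.
    return a


print(gcd(48, 18))  # prints 6
```

Every term in the definition has an obvious counterpart here, which is what makes the comparison with brains or evolution a stretch.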

For the sake of argument, let’s be real generous with the terms “unambiguous”, “sequence”, “goal”, and “recognizable” and say everything is an algorithm if you squint hard enough. It’s still not the end-all-be-all of takes that it’s treated as.

When you create an abstraction, you remove context from a group of things in order to focus on their shared behavior(s). By removing that context, you’re also removing the ability to describe and focus on non-shared behavior(s). So picking and choosing which behavior to focus on is not an arbitrary or objective decision.

If you want to look at everything as an algorithm, you’re losing a ton of context and detail about how the world works. This is a useful tool for us to handle complexity and help our minds tackle giant problems. But people don’t treat it as a tool to focus attention. They treat it as a secret key to unlocking the world’s essence, which is just not valid for most things.

also, the word they actually mean is heuristic.

Can you say more things?

Thanks for the help, but I think I’m still having some trouble understanding what that all means exactly. Could you elaborate on an example where thinking of something as an algorithm results in a clearly and demonstrably worse understanding of it?

Algorithmic thinking is often bad at examining aspects of evolution. Like the fact that crabs, turtles, and trees are all convergent forms that have each evolved multiple times through different paths. What is the unambiguous instruction set to evolve a crab? What initial conditions do you need for it to work? Can we really call the “instruction set” to evolve crabs “prescribed”? Prescribed by whom? Like, there’s a really common mental pattern with evolutionary thinking where we want to sort variations into meaningful and not-meaningful buckets, where this particular aspect of this variation was advantageous, whereas this one is just a fluke. Stuff like that. That’s much closer to algorithmic thinking than the reality where it is a truly random process and the only thing that makes it create coherent results is relative environmental stability over a really long period of time.

I would also guess that algorithmic thinking would fail to catch many aspects of ecological systems, but have thought less about that. It’s not that these subjects can’t gain anything by looking at them through an algorithmic lens. Some really simple mathematical models of population growth are scarily accurate, actually. But insisting on only seeing them algorithmically will not bring you closer to the essence of these systems either.
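As an illustration of how simple such a model can be, here is a sketch of discrete logistic growth. The comment doesn't say which model it has in mind, so logistic growth is an assumption, and the parameter names and values (`n0`, `r`, `k`) are hypothetical:

```python
def logistic_growth(n0: float, r: float, k: float, steps: int) -> list[float]:
    """Discrete logistic growth: N[t+1] = N[t] + r * N[t] * (1 - N[t] / k).

    n0 is the starting population, r the per-step growth rate, and k the
    carrying capacity. All values used below are made up for illustration.
    """
    sizes = [n0]
    for _ in range(steps):
        n = sizes[-1]
        # Growth slows as the population approaches the carrying capacity.
        sizes.append(n + r * n * (1 - n / k))
    return sizes


trajectory = logistic_growth(n0=10.0, r=0.5, k=1000.0, steps=30)
print([round(n) for n in trajectory[::5]])
```

The whole model is one line of arithmetic, and it says nothing about what any individual organism is doing.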

deleted by creator

deleted by creator

deleted by creator

speaking of 4th dimensional processing, https://en.wikipedia.org/wiki/Holonomic_brain_theory is pretty interesting imo

no because the human brain is far more complicated and we don’t know how it works

If you read the current literature on the science of consciousness, the reality is that the best we can do is use things like neuroscience and psychology to rule out a couple previously prominent theories of how consciousness probably works. Beyond that, we’re still very much in the philosophy stage. I imagine we’ll eventually look back on a lot of current metaphysics being written and it will sound about as crazy as “obesity is caused by inhaling the smell of food”, which was a belief of miasma theory before germ theory was discovered.

That said, speaking purely in terms of brain structures, the math that most LLMs do is not nearly complex enough to model a human brain. The fact that we can optimize an LLM for its ability to trick our pattern recognition into perceiving it as conscious does not mean the underlying structures are the same. Similar to how film will always be a series of discrete pictures that blur together into motion when played fast enough. Film is extremely good at tricking our sight into perceiving motion. That doesn’t mean I’m actually watching a physical Death Star explode every time A New Hope plays.

I suppose I already figured that we can’t make a neural network equivalent to a human brain without a complete understanding of how our brains actually work. I also suppose there’s no way to say anything certain about the nature of consciousness yet.

So I guess I should ask this follow up question: Is it possible in theory to build a neural network equivalent to the absolutely tiny brain and nervous system any given insect has? Not to the point of consciousness given that’s probably unfalsifiable, also not just an AI trained to mimic an insect’s behavior, but a 1:1 reconstruction of the 100,000 or so brain cells comprising the cognition of relatively small insects? And not with an LLM, but instead some kind of new model purpose built for this kind of task. I feel as though that might be an easier problem to say something conclusive about.

The biggest issue I can think of with that idea is the neurons in neural networks are only superficially similar to real, biological neurons. But that once again strikes me as a problem of complexity. Individual neurons are probably much easier to model somewhat accurately than an entire brain is, although still nowhere near our reach. If we manage to determine this is possible, then it would seemingly imply to me that someday in the future we could slowly work our way up the complexity gradient from insect cognition to mammalian cognition.

Is it possible in theory to build a neural network equivalent to the absolutely tiny brain and nervous system any given insect has?

IIRC it’s been tried and they utterly failed. part of the problem is that “the brain” isn’t just the central nervous system – a huge chunk of relevant nerves are spread through the whole body and contribute to the function of the whole body, but they’re deeply specialized and how they actually work is not yet well studied. in humans, a huge percentage of our nerve cells are actually in our gut and another meaningful fraction spread through the rest of the body. basically, sensory input comes extremely preprocessed to the brain and some amount of memory isn’t stored centrally. and that’s all before we even talk about how little we know about how neurons actually work – the last time I was reading about this (a decade or so ago) there was significant debate happening about whether real processing even happened in the neurons or whether it was all in the connective tissue, with the neurons basically acting like batteries. the CS model of a neuron is just woefully lacking any real basis in biology except by a poorly understood analogy.